Some people claim they translate tens of thousands of words per day, thanks to the use of AI.

But how strong are those claims actually?

I’m not saying those people are lying. My question is: what do they actually call a translation?

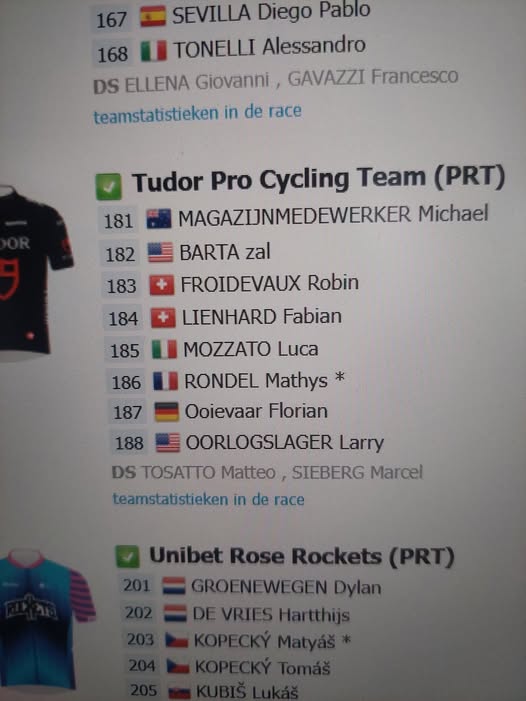

Recently I had a telling example from a job I did myself: 166 000 words in two days! I’m not kidding. That works out to 83 000 words PER DAY.

But here’s the thing: only 9 500 words had to be checked, spread of course over many sentences (or translation units, as we call them in the translation business). In those source sentences, words originally written in caps had been replaced by tags. All I had to do was replace the corresponding words in the translation with those tags.

That means the 166 000 words had already been translated. Only some of them had to be replaced.

I needed two days for that job, which means I really handled almost 5 000 words per day (9 500 words over two days is 4 750). The tricky part was that the tags had to end up in the right position and fit the grammatical structure. Because of that, the translation sometimes had to be rephrased.

It shows that claims of tens of thousands of words translated per day don’t tell us anything unless we get a detailed analysis of the work. How many of the original words have to be translated? How many can be skipped entirely? How many translation units only require a partial translation or retranslation?
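To make the gap concrete, here is a minimal sketch in Python that redoes the arithmetic for the job described above. The figures come from that job. The function and variable names are my own, chosen only for illustration.

```python
def words_per_day(words, days):
    """Average number of words handled per working day."""
    return words / days


# Figures from the job described above.
total_words = 166_000    # size of the whole file
checked_words = 9_500    # words in the units that actually needed work
days = 2

headline_rate = words_per_day(total_words, days)   # what a bold claim would quote
actual_rate = words_per_day(checked_words, days)   # what was really worked on
share_touched = checked_words / total_words        # fraction of the file that needed work

print(f"Headline rate: {headline_rate:,.0f} words/day")
print(f"Actual rate:   {actual_rate:,.0f} words/day")
print(f"Share of the file that needed work: {share_touched:.1%}")
```

The headline rate and the actual rate describe the same two days of work. Only the word count in the numerator changes.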

We have to be careful not to take bold claims about huge translation outputs at face value. After all, a human translator cannot do much more than 2 500 or 3 000 words per day from scratch, with or without AI, or MT as we call it in the translation business.